Know Before You Go: Tackling CJK Language Challenges in Cross-Border Matters

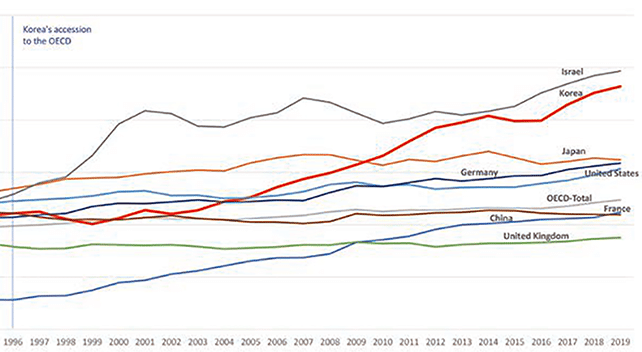

The two largest economies in Asia, China and Japan, have historically seen far lower volumes of eDiscovery than the U.S. However, with a growing global economy, increasing global litigation, investigations, and M&A activity, the expansion of national cybersecurity and data protection laws, and more vigilant regulatory oversight and enforcement, the Asia Pacific region is experiencing the fastest eDiscovery growth in the world.

Even the most experienced legal veterans in the U.S. handle cross-border matters in Asia with care, given technical challenges such as multi-byte CJK data, cultural differences, dissimilar legal frameworks and understandings of eDiscovery, and special considerations like state secrets laws. Because there is no “one-size-fits-all” eDiscovery approach to any country or region, legal teams working in Asia need education on these issues, well-thought-out and often customized plans, and preparation.

In our “Know Before You Go” blog series, we will cover the gamut of Asia cross-border issues and best-practice approaches for U.S. legal teams to consider.

First, we’ll discuss the technical challenges posed by multi-language documents with multi-byte characters, including Chinese, Japanese and Korean (CJK), and how eDiscovery experts with deep CJK knowledge can support even the most experienced cross-border legal teams.

Understanding CJK’s Unique Characteristics and Challenges

DOJ and SEC enforcement actions under the FCPA and antitrust cases involving multinational corporations are on the rise, and it’s increasingly likely that document collections subject to review will be a mix of multiple languages, including CJK, English and perhaps others. As we wrote about in an earlier blog, CJK documents pose specific challenges in eDiscovery:

- Different writing systems: CJK languages do not use alphabets in the Western sense. They rely on different scripts, often have different grammatical constructions, and in some cases the characters are logographic, so that a single character can capture an entire word or phrase. Search, review and analysis using standard review tools that are not equipped to handle these scripts can therefore be difficult, if not impossible.

- Language variations: In Asian language writing, differences in tone between casual and formal, regional language variations or even the use of various keyboard input styles can affect the meaning of a phrase or sentence. For example, Japanese has three writing systems: hiragana, katakana and kanji. The first two are phonetic, with each character representing a syllable. Kanji, on the other hand, is a logographic system whose characters, derived from written Chinese, each represent a word or part of a word. In other languages, such as Vietnamese, people commonly type words in the plain English alphabet without tone marks, which can produce a vastly different meaning than the intended word or phrase.

- Tokenization: Tokenization, the process of segmenting text into words and phrases, is critical for search and analytics. In English, spaces mark word boundaries, and Western eDiscovery review systems handle this well. It is far more difficult with much CJK content, particularly Chinese and Japanese, which is not separated into words by spaces. Different approaches to segmenting the same run of characters can yield different meanings, so a tool must recognize each character and know how to group characters to capture the intended meaning. (All too often, the result looks like ☐ ☐ ☐ ☐ ☐ displayed in review software.)

- Character Encoding: As we described in a previous blog on CJK character encoding challenges, content is composed of a sequence of characters. Computers store this content electronically as numeric values called bytes. Character encoding is the “key” used to convert a sequence of bytes back into characters. Without the right key, it is nearly impossible to read any content other than the most basic English text, which can prevent data from being found in search.
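The “key” idea in the character encoding point can be illustrated with a short Python sketch. The sample string, the helper name `to_unicode`, and the short candidate list are assumptions made for illustration, not a description of any particular review tool. Trying encodings in order is only a rough heuristic, since bytes can sometimes decode cleanly under the wrong encoding, so real pipelines generally combine it with statistical detection and human spot checks.

```python
# Hypothetical sample text ("Tokyo Metropolis"); any Japanese string would do.
text = "東京都"

# The same three characters become different byte sequences under different encodings.
sjis_bytes = text.encode("shift_jis")   # legacy Japanese encoding, 2 bytes per kanji
utf8_bytes = text.encode("utf-8")       # Unicode encoding, 3 bytes per kanji
print(len(sjis_bytes), len(utf8_bytes))  # 6 9

# Decoding with the right key recovers the text.
print(sjis_bytes.decode("shift_jis"))

# Decoding with the wrong key (a Western code page) yields nonsense characters.
print(sjis_bytes.decode("cp1252", errors="replace"))


def to_unicode(raw, candidates=("utf-8", "euc_jp", "shift_jis")):
    """Try each candidate encoding strictly and return (text, encoding_used).

    The candidate list is an assumption for this sketch; a real collection
    may need many more (for example Chinese and Korean code sets).
    """
    for encoding in candidates:
        try:
            return raw.decode(encoding), encoding
        except UnicodeDecodeError:
            continue  # wrong key for these bytes, try the next one
    raise ValueError("none of the candidate encodings fit these bytes")


decoded, used = to_unicode(sjis_bytes)
print(decoded, used)
```

Once the content has been decoded to a Python string, it can be re-encoded as UTF-8 with `decoded.encode("utf-8")` so that downstream search tools see a single, consistent encoding.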

The problem is that much Asian-language content is not stored in Unicode, the de facto character encoding standard that Western tools expect. Each nation has its own distinct legacy code sets, and some, Japan in particular, use several. Many email programs still used in Asia, including “Becky” email or “Thunderbird,” store messages in file types that Western tools may not recognize. In some cases, .MSG email files may have Unicode-compliant main text while metadata (such as email headers) is not Unicode-compliant and converts into nonsense characters. Experts in CJK discovery can recognize multiple encoding standards and convert content to Unicode.
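To make the tokenization point in the list above concrete, here is a minimal Python sketch of one common indexing fallback: overlapping two-character tokens (bigrams) over runs of CJK characters, with ordinary whitespace splitting for everything else. The function names and the simplified code point ranges are assumptions for illustration. Dictionary-based or statistical segmenters are usually more accurate, and this sketch does not represent how any specific review platform works.

```python
def is_cjk(ch):
    """Rough check using a few major Unicode blocks (a simplification)."""
    cp = ord(ch)
    return (
        0x4E00 <= cp <= 0x9FFF      # CJK Unified Ideographs (kanji, hanzi, hanja)
        or 0x3040 <= cp <= 0x30FF   # hiragana and katakana
        or 0xAC00 <= cp <= 0xD7A3   # Hangul syllables
    )


def tokenize(text):
    """Split on whitespace, but emit overlapping bigrams for CJK runs."""
    tokens = []
    cjk_run = []  # consecutive CJK characters seen so far
    word = []     # consecutive non-CJK, non-space characters seen so far

    def flush_cjk():
        if len(cjk_run) == 1:
            tokens.append(cjk_run[0])
        else:
            tokens.extend(a + b for a, b in zip(cjk_run, cjk_run[1:]))
        cjk_run.clear()

    def flush_word():
        if word:
            tokens.append("".join(word))
            word.clear()

    for ch in text:
        if is_cjk(ch):
            flush_word()
            cjk_run.append(ch)
        else:
            if cjk_run:
                flush_cjk()
            if ch.isspace():
                flush_word()
            else:
                word.append(ch)
    if cjk_run:
        flush_cjk()
    flush_word()
    return tokens


sentence = "東京都に住む"  # "(I) live in Tokyo Metropolis"
print(sentence.split())     # ['東京都に住む']  whitespace splitting finds no words
print(tokenize(sentence))   # ['東京', '京都', '都に', 'に住', '住む']
print(tokenize("FCPA 調査"))  # ['FCPA', '調査']
```

The second output shows why segmentation choices matter: the bigram 京都 (Kyoto) appears inside 東京都 (Tokyo Metropolis), so a search for Kyoto would hit a document that only mentions Tokyo. Bigram indexing trades this kind of false hit for the assurance that no real match is missed.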

Workflow Options

Workflows to address the unique characteristics of Asian languages range from requiring all documents to be reviewed by a bilingual (or multilingual) team, to conducting concurrent English/Chinese/Japanese or other language reviews, to setting up specific workflows for translations. These options can be very complex and costly if not considered before the start of a review.

When you know that a cross-border case will involve CJK data, planning early can go a long way: look for specialized technology designed to handle the nuances of CJK languages along with an experienced team with deep knowledge of CJK languages and their respective data structures in an eDiscovery context.